OWL-MCP

A Model-Context-Protocol server that enables AI assistants to create, edit, and manage Web Ontology Language (OWL) ontologies through function calls using OWL functional syntax.

OWL-MCP is a Model-Context-Protocol (MCP) server for working with Web Ontology Language (OWL) ontologies.

Quick Start

This walks you through using owl-mcp with Goose, but any MCP-enabled AI host will work.

Install Goose

You can use either the Desktop or CLI version of Goose from here:

Follow the instructions for setting up an LLM provider (Anthropic is recommended).

Install OWL-MCP extension

You can either install directly from this link:

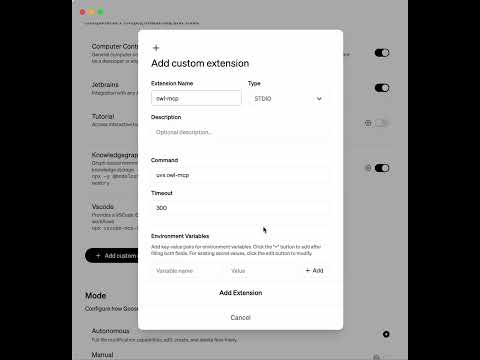

To do this manually instead, add a new entry for owlmcp in the Extension section of Goose, using this command:

uvx owl-mcp

This video shows how to do this manually:

Try it out

You can ask to create an ontology, and add axioms to an ontology:

How this works

The MCP server provides function calls for finding, adding, and removing OWL axioms, all expressed in OWL functional syntax. Each function call is accompanied by the file path of the OWL file on your disk. Any format supported by py-horned-owl is accepted (we follow OBO guidelines and recommend functional syntax for source files).

The server keeps an instance of the ontology in memory and keeps it in sync with disk: every CRUD operation updates the in-memory model and writes the change to the file. If you have Protege running, Protege will also sync with the local file and show the updates.
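The write-through behaviour described above can be sketched in a few lines of Python. This is a toy illustration only: `ToyAxiomStore`, its method names, and the one-axiom-per-line file layout are assumptions made for this example, not the owl-mcp API and not a valid OWL document format. It only shows the idea that each add or remove changes memory and disk together.

```python
import tempfile
from pathlib import Path


class ToyAxiomStore:
    """Hypothetical stand-in (NOT the owl-mcp API): axiom strings mirrored to a file."""

    def __init__(self, path):
        self.path = Path(path)
        # Load existing axioms (one per line) if the file is already there.
        if self.path.exists():
            self.axioms = [ln for ln in self.path.read_text().splitlines() if ln.strip()]
        else:
            self.axioms = []

    def _sync(self):
        # Every mutation rewrites the file so that disk matches memory.
        self.path.write_text("\n".join(self.axioms) + "\n")

    def add_axiom(self, axiom):
        if axiom not in self.axioms:
            self.axioms.append(axiom)
            self._sync()

    def remove_axiom(self, axiom):
        if axiom in self.axioms:
            self.axioms.remove(axiom)
            self._sync()

    def find_axioms(self, pattern):
        # Plain substring match on the functional-syntax string.
        return [a for a in self.axioms if pattern in a]


workdir = Path(tempfile.mkdtemp())
store = ToyAxiomStore(workdir / "demo.ofn")
store.add_axiom("SubClassOf(ex:Dog ex:Animal)")
store.add_axiom("SubClassOf(ex:Cat ex:Animal)")
store.remove_axiom("SubClassOf(ex:Cat ex:Animal)")
found = store.find_axioms("ex:Dog")
on_disk = (workdir / "demo.ofn").read_text()
```

Reopening the same path with a fresh `ToyAxiomStore` yields the same axiom list, which is the property that lets an external tool watching the file stay up to date.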

The server is well adapted for working with OBO-style ontologies: when OWL strings are sent back to the client, labels for opaque IDs are appended in trailing # comments, as is common in OBO format.
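As a rough illustration of label comments, the sketch below appends a `#` comment naming each opaque ID found in an axiom string. The `LABELS` table, the `with_label_comment` helper, the ID pattern, and the exact comment layout are all assumptions made for this example; the output format the server actually produces may differ.

```python
import re

# Hypothetical label table; a real server would read labels from annotations.
LABELS = {
    "GO:0008150": "biological_process",
    "GO:0009987": "cellular process",
}

# Matches OBO-style opaque IDs such as GO:0008150 (prefix, colon, digits).
CURIE = re.compile(r"\b[A-Za-z]+:\d+\b")


def with_label_comment(axiom, labels):
    """Return the axiom followed by a '#' comment listing known labels."""
    seen = []
    for curie in CURIE.findall(axiom):
        if curie in labels and curie not in seen:
            seen.append(curie)
    if not seen:
        return axiom  # nothing to annotate
    comment = "; ".join(f"{c} {labels[c]}" for c in seen)
    return f"{axiom} # {comment}"


rendered = with_label_comment("SubClassOf(GO:0009987 GO:0008150)", LABELS)
print(rendered)
# SubClassOf(GO:0009987 GO:0008150) # GO:0009987 cellular process; GO:0008150 biological_process
```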

Key Features

- MCP Server Integration: Connect AI assistants directly to OWL ontologies using the standardized Model-Context-Protocol

- Thread-safe operations: All ontology operations are thread-safe, making it suitable for multi-user environments

- File synchronization: Changes to the ontology file on disk are automatically detected and synchronized

- Event-based notifications: Register observers to be notified of changes to the ontology

- Simple string-based API: Work with OWL axioms as strings in functional syntax without dealing with complex object models

- Configuration system: Store and manage settings for frequently-used ontologies

- Label support: Access human-readable labels for entities with configurable annotation properties
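To make the thread-safety and event-notification features above more concrete, here is a minimal sketch of how the two can combine: a lock guards the axiom set, and observers are called after each successful change. `ObservableAxioms` and its methods are hypothetical names for illustration and are not taken from the owl-mcp codebase.

```python
import threading


class ObservableAxioms:
    """Hypothetical illustration (not owl-mcp's classes): locked set plus observers."""

    def __init__(self):
        self._lock = threading.Lock()
        self._axioms = set()
        self._observers = []

    def subscribe(self, callback):
        with self._lock:
            self._observers.append(callback)

    def add_axiom(self, axiom):
        with self._lock:
            if axiom in self._axioms:
                return False  # duplicate: no change, no event
            self._axioms.add(axiom)
            observers = list(self._observers)
        # Notify outside the lock so a callback may safely call back into the store.
        for notify in observers:
            notify("added", axiom)
        return True

    def count(self):
        with self._lock:
            return len(self._axioms)


events = []
events_lock = threading.Lock()


def record(kind, axiom):
    with events_lock:
        events.append((kind, axiom))


store = ObservableAxioms()
store.subscribe(record)


def worker(n):
    for i in range(50):
        store.add_axiom(f"Declaration(Class(ex:C{n}_{i}))")
    # All workers race to add the same axiom; only one should succeed.
    store.add_axiom("Declaration(Class(ex:Shared))")


threads = [threading.Thread(target=worker, args=(n,)) for n in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()
```

Four workers each add 50 distinct axioms and race on one shared axiom, so the store ends with 201 axioms and exactly 201 "added" events.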
