Interactive Feedback MCP

MCP server that enables human-in-the-loop workflow in AI-assisted development tools by allowing users to provide direct feedback to AI agents without consuming additional premium requests.

Tools

interactive_feedback

Request interactive feedback from the user

README

🗣️ Interactive Feedback MCP

Simple MCP Server to enable a human-in-the-loop workflow in AI-assisted development tools like Cursor, Cline and Windsurf. This server allows you to easily provide feedback directly to the AI agent, bridging the gap between AI and you.

Note: This server is designed to run locally alongside the MCP client (e.g., Claude Desktop, VS Code), as it needs direct access to the user's operating system to display notifications.

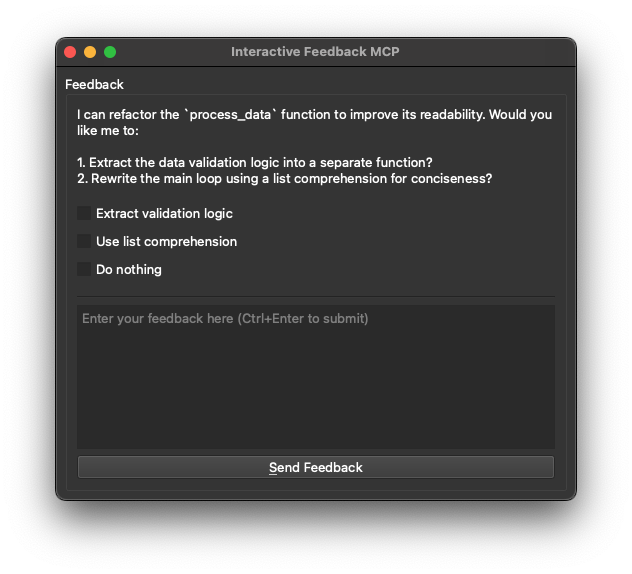

🖼️ Example

💡 Why Use This?

In environments like Cursor, every prompt you send to the LLM is treated as a distinct request — and each one counts against your monthly limit (e.g. 500 premium requests). This becomes inefficient when you're iterating on vague instructions or correcting misunderstood output, as each follow-up clarification triggers a full new request.

This MCP server introduces a workaround: it allows the model to pause and request clarification before finalizing the response. Instead of completing the request, the model triggers a tool call (interactive_feedback) that opens an interactive feedback window. You can then provide more detail or ask for changes — and the model continues the session, all within a single request.

Under the hood, it's just a clever use of tool calls to defer the completion of the request. Since tool calls don't count as separate premium interactions, you can loop through multiple feedback cycles without consuming additional requests.
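The request accounting described above can be illustrated with a small simulation. This is only a sketch under stated assumptions: `run_session`, `ask_user`, and the counters are hypothetical names, the user is scripted, and no real LLM or MCP client is involved. It assumes, as the text states, that only the initial prompt is billed and that tool calls are not.

```python
from typing import Callable, List


def run_session(initial_prompt: str, ask_user: Callable[[str], str]) -> dict:
    """Simulate one billed request that loops through feedback tool calls."""
    premium_requests = 1  # assumption: only the initial prompt is billed
    tool_calls = 0
    history: List[str] = [initial_prompt]
    while True:
        # Instead of finishing, the model pauses and calls the feedback tool.
        feedback = ask_user(f"Draft based on {len(history)} instruction(s). Any changes?")
        tool_calls += 1
        if not feedback.strip():
            break  # empty feedback: the user is satisfied, so the request ends
        history.append(feedback)
    return {
        "premium_requests": premium_requests,
        "tool_calls": tool_calls,
        "history": history,
    }


# Scripted user: two rounds of corrections, then an empty reply to finish.
replies = iter(["use tabs, not spaces", "rename foo to bar", ""])
result = run_session("refactor utils.py", lambda question: next(replies))
print(result["premium_requests"], result["tool_calls"])  # prints: 1 3
```

Without the feedback tool, the same two corrections would each be sent as a new prompt, so the session would cost three requests instead of one.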

Essentially, this helps your AI assistant ask for clarification instead of guessing, without wasting another request. That means fewer wrong answers, better performance, and less wasted API usage.

- 💰 Reduced Premium API Calls: Avoid wasting expensive API calls generating code based on guesswork.

- ✅ Fewer Errors: Clarification _before_ action means less incorrect code and wasted time.

- ⏱️ Faster Cycles: Quick confirmations beat debugging wrong guesses.

- 🎮 Better Collaboration: Turns one-way instructions into a dialogue, keeping you in control.

🛠️ Tools

This server exposes the following tool via the Model Context Protocol (MCP):

interactive_feedback: Asks the user a question and returns their answer. Can display predefined options.
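As a rough illustration of that contract (a question goes in, optionally with predefined options, and the user's answer comes back), the sketch below models it with plain functions. The names `format_prompt` and `resolve_answer`, the parameter names, and the numbered-choice convention are assumptions made for this example. The actual server shows a feedback window and may handle options differently.

```python
from typing import Optional, Sequence


def format_prompt(question: str, options: Optional[Sequence[str]] = None) -> str:
    """Render the question, followed by numbered options if any were given."""
    lines = [question]
    for index, option in enumerate(options or [], start=1):
        lines.append(f"  {index}. {option}")
    return "\n".join(lines)


def resolve_answer(raw: str, options: Optional[Sequence[str]] = None) -> str:
    """Map a valid option number to its option; otherwise return the free text."""
    text = raw.strip()
    if options and text.isdecimal() and 1 <= int(text) <= len(options):
        return options[int(text) - 1]
    return text


choices = ["SQLite", "Postgres"]
print(format_prompt("Which database should I use?", choices))
print(resolve_answer("2", choices))          # prints: Postgres
print(resolve_answer(" use MySQL ", choices))  # prints: use MySQL
```

Predefined options keep the common case to a single click or keystroke, while free text remains available when none of the options fits.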

📦 Installation

- Prerequisites:

  - Python 3.11 or newer.

  - uv (Python package manager). Install it with:

    - Windows:

          pip install uv

    - Linux:

          curl -LsSf https://astral.sh/uv/install.sh | sh

    - macOS:

          brew install uv

- Get the code:

  - Clone this repository:

        git clone https://github.com/pauoliva/interactive-feedback-mcp.git

  - Or download the source code.
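Before configuring the server, it can help to confirm that both prerequisites are in place. The following is a minimal sketch that uses only the Python standard library. `check_prerequisites` is a hypothetical helper written for this example and is not part of the repository.

```python
import shutil
import sys


def check_prerequisites(version_info=sys.version_info, which=shutil.which) -> list:
    """Return a list of human-readable problems; an empty list means all good."""
    problems = []
    # The README asks for Python 3.11 or newer.
    if tuple(version_info[:2]) < (3, 11):
        problems.append("Python 3.11 or newer is required")
    # uv must be discoverable on PATH for the "uv" command to work.
    if which("uv") is None:
        problems.append("uv was not found on PATH")
    return problems


problems = check_prerequisites()
for problem in problems:
    print(problem)
if not problems:
    print("All prerequisites found")
```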

⚙️ Configuration

- Add the following configuration to your claude_desktop_config.json (Claude Desktop) or mcp.json (Cursor). Remember to change the /path/to/interactive-feedback-mcp path to the actual path where you cloned the repository on your system.

    {
      "mcpServers": {
        "interactive-feedback": {
          "command": "uv",
          "args": [
            "--directory",
            "/path/to/interactive-feedback-mcp",
            "run",
            "server.py"
          ],
          "timeout": 600,
          "autoApprove": [
            "interactive_feedback"
          ]
        }
      }
    }
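If the config file already lists other servers, the new entry has to be merged in rather than pasted over them. The sketch below shows one way to do that in Python. `add_feedback_server` is a hypothetical helper, and `other-server` is a made-up existing entry used only for illustration. The keys and values of the new entry mirror the configuration above.

```python
import json


def add_feedback_server(config: dict, repo_path: str, timeout: int = 600) -> dict:
    """Add or overwrite the interactive-feedback entry, keeping other servers."""
    servers = config.setdefault("mcpServers", {})
    servers["interactive-feedback"] = {
        "command": "uv",
        "args": ["--directory", repo_path, "run", "server.py"],
        "timeout": timeout,
        "autoApprove": ["interactive_feedback"],
    }
    return config


# Hypothetical existing config with one unrelated server already present.
existing = {"mcpServers": {"other-server": {"command": "node", "args": ["index.js"]}}}
merged = add_feedback_server(existing, "/path/to/interactive-feedback-mcp")
print(json.dumps(merged, indent=2))
```

In practice the dictionary would be loaded from and written back to the client's config file with `json.load` and `json.dump`. That step is left out here so the example does not touch any real files.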

- Add the following to the custom rules in your AI assistant (in Cursor Settings > Rules > User Rules):

If requirements or instructions are unclear, use the interactive_feedback tool to ask the user clarifying questions before proceeding; do not make assumptions. Whenever possible, present the user with predefined options through the interactive_feedback MCP tool to facilitate quick decisions.

Whenever you're about to complete a user request, call the interactive_feedback tool to request user feedback before ending the process. If the feedback is empty, you can end the request; do not call the tool in a loop.

This will ensure your AI assistant always uses this MCP server to request user feedback when the prompt is unclear and before marking the task as completed.

🙏 Acknowledgements

Developed by Fábio Ferreira (@fabiomlferreira).

Enhanced by Pau Oliva (@pof) with ideas from Tommy Tong's interactive-mcp.
